AI Software

Aliado RISC-V SDK

Quickly and seamlessly develop, debug and fine-tune applications for Semidynamics RISC-V hardware with the Aliado RISC-V SDK. It is a complete software development solution that includes a compilation and debugging toolchain, emulators for functional testing of your applications, and a highly optimized library of common routines, all integrated into a single Eclipse-based development environment.

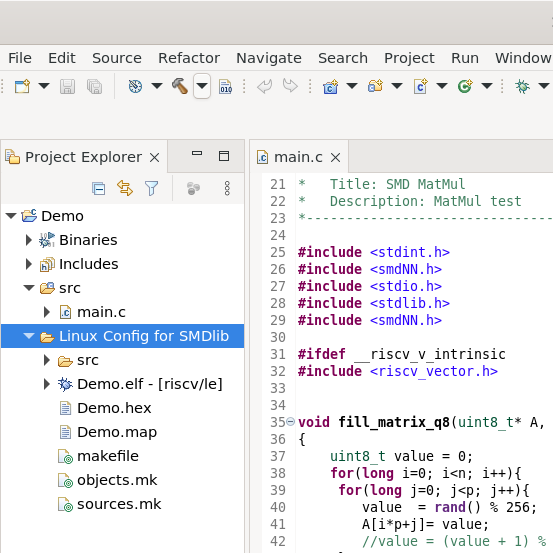

Aliado IDE

Development for RISC-V and Semidynamics hardware is simple with the Aliado IDE. Compatible with Linux and Windows Subsystem for Linux (WSL) environments, it integrates all SDK features so that users can write C and C++ applications with as little friction as possible. Immediately after installation, any user project can be compiled with the Aliado toolchain and run on the Aliado emulators.

Based on Eclipse, it includes the features needed to develop and optimize software, including debugging, a memory viewer, a disassembler and assembly viewer, code versioning, and more. Full compatibility with the Eclipse plugin ecosystem further allows users to tailor the environment to their workflows.

- Compatible with Linux and Windows Subsystem for Linux.
- ASM/C/C++ software development environment.
- Graphical debugging interface.
- Integrated serial terminal and semihosting.
- Integrated Aliado emulators.
- Integrated cross-compilation toolchain.

Aliado Toolchain

The Aliado RISC-V SDK includes a comprehensive toolchain for compiling and debugging code targeting Semidynamics hardware. The toolchain supports the two main compilation frameworks in the field, GCC and LLVM/Clang, giving users a familiar environment with the full set of tools, including a debugger, linker and assembler. The compilers support the features expected of a modern compiler, including code optimization and auto-vectorization. The toolchain works with any IDE of your choice and is also integrated into the Aliado IDE for a complete development solution.

- Compatible with Linux and Windows Subsystem for Linux.
- C/C++ software development environment.
- Supports GCC and LLVM.
- Auto-vectorization.

Aliado Kernel Library

The Aliado Kernel Library was created to make the most efficient use of Semidynamics RISC-V hardware. It is a collection of functions operating on multi-dimensional data that have been heavily optimized for performance. The focus is on AI, where a model’s individual operations are called kernels: a large number of crucial operations such as matrix multiplications, transpositions and activation functions have been optimized for Semidynamics hardware, enabling quick development of efficient AI applications.

- Leverages Semidynamics Vector Unit.
- Leverages Semidynamics Tensor Unit.
- Designed for multi-dimensional data.
- Mathematical library.
- Neural Network Library.
- Data transformation.
- Helper Functions.
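To illustrate what "kernels" means here, the following is a minimal pure-Python reference of three operations named above (matrix multiplication, transposition and an activation function) chained into one dense layer. It is only a sketch of the arithmetic; the function names `matmul`, `transpose` and `relu` and the sample values are assumptions made for this example and are not the Aliado Kernel Library API, which is not shown on this page.

```python
def matmul(a, b):
    """Reference matrix multiplication of two row-major nested lists."""
    inner = len(b)
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    cols = len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a
    ]


def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]


def relu(m):
    """Element-wise ReLU activation: negative values become 0.0."""
    return [[max(0.0, x) for x in row] for row in m]


# Hypothetical sample data: two input rows and a 2x2 weight matrix
# stored as (outputs x inputs), hence the transpose before multiplying.
inputs = [[1.0, -2.0], [0.5, 3.0]]
weights = [[2.0, 0.0], [1.0, -1.0]]

layer_output = relu(matmul(inputs, transpose(weights)))
print(layer_output)
```

An optimized kernel computes the same result as this reference; the difference lies in how the loops are mapped onto vector and tensor hardware.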

Aliado Emulators

The Aliado RISC-V SDK includes the QEMU and Spike emulators, which enable you to execute your code in your current Linux environment or to emulate bare-metal execution. With complete support for RISC-V and Aliado extensions, you can optimize and fine-tune your code quickly and validate it before ever needing silicon, derisking your development process.

- User mode for bare-metal executions.
- Semi-hosting for lightweight emulation without needing a virtual OS.
- Up to 1 billion instructions per second for QEMU and up to 100 million instructions per second for Spike.
- Full support for all RISC-V extensions.
- Full support for Semidynamics instructions.
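The throughput figures above can be turned into a rough planning estimate. The sketch below divides a workload's instruction count by each quoted peak rate; because the rates are "up to" figures, the results are lower bounds on wall-clock time, not predictions. The function name and the 50-billion-instruction workload are hypothetical values chosen for illustration.

```python
# Peak rates quoted above; real throughput depends on the workload and host.
QEMU_PEAK_IPS = 1_000_000_000    # up to 1 billion instructions per second
SPIKE_PEAK_IPS = 100_000_000     # up to 100 million instructions per second


def min_emulation_seconds(instruction_count, peak_ips):
    """Lower bound on run time: instruction count divided by the peak rate."""
    if peak_ips <= 0:
        raise ValueError("peak_ips must be positive")
    return instruction_count / peak_ips


# Hypothetical workload size, for illustration only.
workload = 50_000_000_000

qemu_seconds = min_emulation_seconds(workload, QEMU_PEAK_IPS)
spike_seconds = min_emulation_seconds(workload, SPIKE_PEAK_IPS)
print(f"QEMU:  at least {qemu_seconds:.0f} s")
print(f"Spike: at least {spike_seconds:.0f} s")
```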

SMD ONNX-Runtime

By leveraging ONNX, Semidynamics hardware is ready to run thousands of models available in the ONNX format in common repositories such as Hugging Face or the ONNX Model Zoo. Using the Semidynamics ONNX-Runtime, you can integrate any of these models out of the box on Semidynamics hardware. The Microsoft-led ONNX project defines a universal format for AI models, and ONNX-RT enables any ONNX model to be executed on a wide range of hardware. Leveraging our Kernel Library, ONNX-RT is provided with support for Semidynamics hardware with Vector and Tensor units for the most efficient possible execution of AI applications.

AI Models

Semidynamics is actively researching and developing in the AI space and has therefore curated a set of AI models that we use as technology demonstrators. You can access these models in the ONNX format in our Model Zoo.

Aliado Quantization Recommender

The Aliado Quantization Recommender is a helper tool that gives users guidance on how best to quantize their models. It evaluates any ONNX model and recommends the best quantization method for the desired quantization bit-level. It is provided as a user-friendly tool to guide end users through the complex world of AI model quantization.

- Focuses your work only on the method that works for you.
- Supports all model types including Transformer models.
- Takes into account the model sensitivity defined by the user and the target datatype, which determines the type of quantization.
- Specific quantization techniques if calibration data is available.
- Recommendation support for int4, int3 and int2 datatypes.
- Transparent score-based system, which helps to visualize the recommendations in a hierarchical way.
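To make the bit-level trade-off concrete, here is a minimal sketch of symmetric per-tensor quantization at the int4, int3 and int2 widths listed above. It is a generic textbook technique shown for illustration, not the Recommender's scoring method, which is not described on this page. The helper names `quantize_symmetric` and `mean_abs_error` and the sample weights are assumptions.

```python
def quantize_symmetric(values, bits):
    """Symmetric per-tensor quantization to signed `bits`-bit integers.

    Returns (codes, scale) such that each value is approximated by code * scale.
    """
    qmax = 2 ** (bits - 1) - 1      # 7 for int4, 3 for int3, 1 for int2
    qmin = -(2 ** (bits - 1))       # -8 for int4, -4 for int3, -2 for int2
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the representable range.
    codes = [min(qmax, max(qmin, round(v / scale))) for v in values]
    return codes, scale


def mean_abs_error(values, codes, scale):
    """Average absolute difference between original and dequantized values."""
    return sum(abs(v - c * scale) for v, c in zip(values, codes)) / len(values)


# Made-up sample weights, for illustration only.
weights = [0.82, -0.41, 0.05, -0.97, 0.33, 0.61, -0.12, 0.27]

errors = {}
for bits in (4, 3, 2):
    codes, scale = quantize_symmetric(weights, bits)
    errors[bits] = mean_abs_error(weights, codes, scale)
    print(f"int{bits}: codes={codes} mean abs error={errors[bits]:.4f}")
```

For this sample the reconstruction error grows as the bit width shrinks, which is why the choice of method matters more at very low bit widths and why a model's sensitivity to such error is a useful input to any recommendation.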